|

Using the state-of-the-art N8 High Performance Computing (HPC) system, impressive 3D modelling technology, and some handy machine learning thrown in for good measure, researchers from Manchester University’s School of Earth and Environmental Sciences set out to bring the prehistoric “tyrant king” to digital life. The work yielded new information about its biomechanics. Short version: Steven Spielberg lied to us.

There were two main aspects to Manchester University’s study. The first was digitizing the dinosaur and creating a 3D simulation in the computer. This is something Sellers’ team has been working on for a number of years. Way back in 2013, Sellers used then-new laser-scanning techniques and an advanced computer modeling system to recreate the Argentinosaurus, a friendlier, herbivorous dinosaur that measured a whopping 131 feet and weighed as much as 80 tons.

The second part of the T. Rex study is the really groundbreaking bit, though. This is where the machine learning comes in: it was used to generate the necessary locomotion calculations and to incorporate myriad hard and soft constraints in the simulation. “[I utilized] completely unsupervised learning, using a goal criteria of ‘Get from A to B as fast as possible,’ and given enough computer time it can manage that all on its own,” Sellers explained. “This gets around the problem of just getting out what you put in. No one knows how T. Rex moved, but at least this way you have an objective optimization criteria to justify the choices made. It also means that it is relatively easy to add in extra constraints like ‘don’t break any bones.’ It’s basically a multi-physics, multi-objective simulation system.”

The researchers concluded that the T. Rex could not run because of its size and weight. This means it would have been unable to pursue prey at high speeds, and even its regular walking speed would have been hampered by its unwieldy skeleton.
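The idea Sellers describes, an objective of “as fast as possible” combined with a hard constraint like “don’t break any bones,” can be illustrated with a toy sketch. This is not the study’s simulation: the stress formula, the limit `MAX_STRESS`, the parameter bounds, and the simple random hill-climbing search are all assumptions made up for illustration.

```python
import random

# Hypothetical toy model: every constant and formula here is an illustrative
# assumption, not a value or method taken from the Manchester study.
MAX_STRESS = 100.0  # assumed bone-stress limit (arbitrary units)


def speed(stride_length, stride_freq):
    """Forward speed as stride length times stride frequency."""
    return stride_length * stride_freq


def bone_stress(stride_length, stride_freq, mass_tonnes):
    """Made-up stress proxy that grows with body mass and stride effort."""
    return mass_tonnes * stride_length * stride_freq ** 2


def fitness(params, mass_tonnes):
    """Objective: go as fast as possible; gaits that break bones score -inf."""
    stride_length, stride_freq = params
    if bone_stress(stride_length, stride_freq, mass_tonnes) > MAX_STRESS:
        return float("-inf")  # hard constraint: "don't break any bones"
    return speed(stride_length, stride_freq)


def optimise(mass_tonnes, steps=5000, seed=0):
    """Random hill climbing: keep a mutated gait only if it scores higher."""
    rng = random.Random(seed)
    best = (1.0, 0.5)  # a slow, safe starting gait
    best_score = fitness(best, mass_tonnes)
    for _ in range(steps):
        candidate = (
            min(4.0, max(0.1, best[0] + rng.gauss(0, 0.1))),  # stride length
            min(5.0, max(0.1, best[1] + rng.gauss(0, 0.1))),  # stride frequency
        )
        score = fitness(candidate, mass_tonnes)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


if __name__ == "__main__":
    for mass in (1.0, 7.0):
        gait, top_speed = optimise(mass)
        print(f"mass={mass} t  gait={gait}  top speed={top_speed:.2f}")
```

In this toy setup the heavier animal ends up with a lower top speed, because the stress limit is reached at a lower stride frequency. That mirrors the shape of the researchers’ argument, though none of the numbers carry over.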


NFL Digital Media has relaunched NFL.com as part of a league-wide digital transformation. In a statement, the NFL says that today's relaunch is "just the beginning" of its digital transformation that will extend to streaming and social updates throughout the 2017-2018 season.

Why it matters: Jennifer Leung, Director of Product at NFL Digital Media, says the transformation seeks to accomplish two main goals:

Revamp the fan experience: Use better measurement, analytics and search/discovery tools to connect with fans, and deliver more fan-based, team-based content.

Make it easier to access live games and game highlights: Provide simpler access to livestreams on the site and on mobile and work with internet providers on streamlining packages so subscribers can easily access content anywhere.

Go deeper: Leung says reconciling distribution rights is "the biggest challenge" for the League digitally right now, which makes sense given that the landscape is shifting quickly from traditional linear TV to digital. Still, their focus is on streamlining the fan experience, to ensure viewers can watch and follow games on any device.

Developed by AMP Robotics, this robot makes use of artificial intelligence to recognize and sort food and beverage containers. Clarke has already been deployed in a municipal waste facility in Denver, Colorado, where it helps out with the trash-sorting system. Using a visible-light camera, it can spot milk, juice, and food cartons and pull them out using its robotic arm and suction cups. These items are then diverted away from the landfill, and sent instead to the appropriate recycling facility.

With a reliable rate of 60 items a minute, Clarke picks up recyclable waste with 90-percent accuracy and is about 50 percent faster than a human doing the same job. Ultimately, that results in a 50-percent reduction in sorting costs. “The fundamental platform that we’ve created was a system to sort pretty much all the commodities that are in a recycling facility today,” AMP Robotics founder Matanya Horowitz told Engadget. “Whether it’s cardboard, No. 1 plastics, No. 2 plastics, or cartons; cartons just ended up being a great place for us to start.” And because Clarke is an AI-based system, the more it works, the smarter it gets.

The doctors conceived the scenarios. A team of programmers at AiSolve took the medical environment and created an AI-powered virtual world in which students can make decisions and progress or re-evaluate their decisions based upon responses from the virtual patient, virtual medical staff, and other program parameters.

Pediatric emergencies are rare, but by their nature, they are intense. The time pressure is extreme, measured in seconds or minutes. Young surgeons can’t practice these scenarios in real life as often as would be necessary to gain expertise. They usually have to work with mannequins, and the cost can run $430,000 a year for a single hospital to train surgeons on standard scenarios, according to Todd Chang, a doctor at CHLA. The cost of the VR system is less than that amount, said Shauna Heller, executive director of the project and former developer relations liaison for non-game projects at Oculus.

“This sort of cutting-edge medical training is where VR shines,” said AiSolve CEO Devi Kolli in a statement. “Working with the project team and CHLA doctors, we harnessed our AI-powered VR simulation tech to closely replicate real-life scenarios in a way simply not possible before. Plus, the AI features let students customize their learning, which strengthens their skills in the long run.”

The VR simulation not only reproduces the scenarios, it captures them in a controlled training experience, where performance can be measured and adjustments made. The simulation reproduces the emergency situation. Paramedics rattle off symptoms. Nurses and technicians turn to the participating doctor to make a decision. Distraught parents pray for their child’s survival. It’s like a real Hollywood drama, but visceral and interactive. It prepares the surgeon for a real-life emergency without putting real humans’ lives on the line.

“Given digital’s ability to be very quick, we decided to leverage Facebook as a platform because it’s a platform where you get that reach that you’re seeking but you can also be hyper targeted at the same time,” said Ari Ben-Canaan, senior manager of global advertising services at Hershey.
“TV production lead times are typically very long and involved whereas digital can be a little bit more agile and you don’t have to be so precious about all the editing schedules and the effects. Digital is an ephemeral environment where people are consuming content and moving on so we’re able to produce something that was really quick, high quality [and] built for an environment where we knew viewers were going to consume it and then probably go on to their social feeds.”

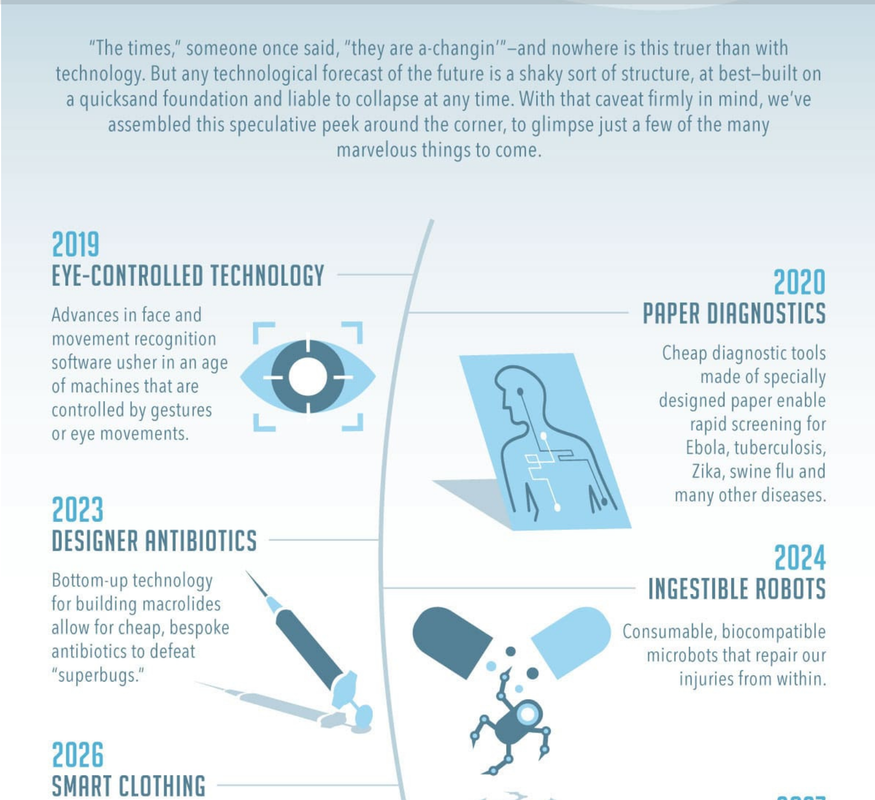

Hershey’s then looked at favorability, brand awareness and ad recall with a Nielsen Brand study that included a sample size of roughly 500. According to Facebook, the campaign lifted favorability by 5 points, brand awareness by 11 points and ad recall by 20 points.

Eye-controlled technology in 2019, smart clothing by 2026, and wavetop and undersea cities by 2055. Here are some of the most interesting predictions about the future from Scientific American and the National Academy of Sciences.

Put aside your doubts. After three years of work, ThyssenKrupp is testing the Multi (an elevator that can travel sideways) in a German tower and finalizing the safety certification. This crazy contraption zooms up, down, left, right, and diagonally.

Multi ditches the cables that suspend conventional elevator cars in favor of magnetic levitation, the same technology used in high-speed trains and the proposed Hyperloop. Strong magnets on every Multi car work with magnetized coils running along the elevator hoistway’s guide rails to make the cars float. Turning these coils on and off creates magnetic fields strong enough to pull the car in various directions. “In past, the industry basically tried to compensate for taller buildings by running a faster car,” CEO Patrick Bass says. Multi instead increases efficiency by increasing volume. Ditching cables lets ThyssenKrupp stack elevator cars at nearly every floor without overloading the system. When one car blocks another, it can move left or right out of the way. “You can manage a traffic grid like you would a subway,” Bass says. “We can guarantee a cabin will be at that floor every 30 seconds.”

The Chinese Micius Satellite team announced the results of its first experiments. The team created the first satellite-to-ground quantum network, in the process smashing the record for the longest distance over which entanglement has been measured. And they’ve used this quantum network to teleport the first object from the ground to orbit.

Teleportation has become a standard operation in quantum optics labs around the world. The technique relies on the strange phenomenon of entanglement. This occurs when two quantum objects, such as photons, form at the same instant and point in space and so share the same existence. In technical terms, they are described by the same wave function. The curious thing about entanglement is that this shared existence continues even when the photons are separated by vast distances. So a measurement on one immediately influences the state of the other, regardless of the distance between them.

This is the first time that any object has been teleported from Earth to orbit, and it smashes the record for the longest distance for entanglement. That’s impressive work that sets the stage for much more ambitious goals in the future. “This work establishes the first ground-to-satellite up-link for faithful and ultra-long-distance quantum teleportation, an essential step toward global-scale quantum internet,” says the team.

Google is awarding the Press Association, a large British news agency, $805,000 to build software to automate the writing of 30,000 local stories a month.

The money comes from a Google fund, the Digital News Initiative, which the search giant started with a commitment to invest over $170 million to support digital innovation in newsrooms across Europe. The Press Association received the funding in partnership with Urbs Media, an automation software startup specializing in combing through large open datasets. Together, the Press Association and Urbs Media will work on a software project dubbed Radar, which stands for Reporters And Data And Robots. Radar aims to automate local reporting with large public databases from government agencies or local law enforcement, basically roboticizing the work of reporters. Stories from the data will be penned using Natural Language Generation, which converts information gleaned from the data into words.

Desktopography projects digital applications (like your calendar, map, or Google Docs) onto a desk where people can pinch, swipe, and tap. Using a depth camera and pocket projector, Robert Xiao, a Carnegie Mellon University computer scientist who leads the Desktopography project, built a small unit that people can screw directly into a standard lightbulb socket.

The depth camera creates a constantly updated 3-D map of the desktop, noting when objects move and when hands enter the scene. This information is then passed along to the rig’s brains, which Xiao's team programmed to distinguish between fingers and, say, a dry erase marker. This distinction is important since Desktopography works like an oversized touchscreen. Xiao designed a few new interactions, like tapping with five fingers to surface an application launcher, or lifting a hand to exit an app. But for the most part, Desktopography applications still rely on tapping, pinching, and swiping. Smartly, the researchers designed a feature that makes digital apps snap to hard edges on laptops or phones, which could allow projected interfaces to act like an augmentation of physical objects like keyboards. “We want to put the digital and physical in the same environment so we can eventually look at merging these things together in a very intelligent way,” Xiao says. |

A2D Digital Feed: Follow the latest news on digital transformation. |